a small wearable / open research project

Sentinels.

A device that listens to the soundscape and gently maintains it — emitting calibrated bird-band signals at the volume of quiet conversation, so the brainstem can stand down.

— ♪ —

§ 01 — The hypothesis

A circuit that doesn't know you live in a city.

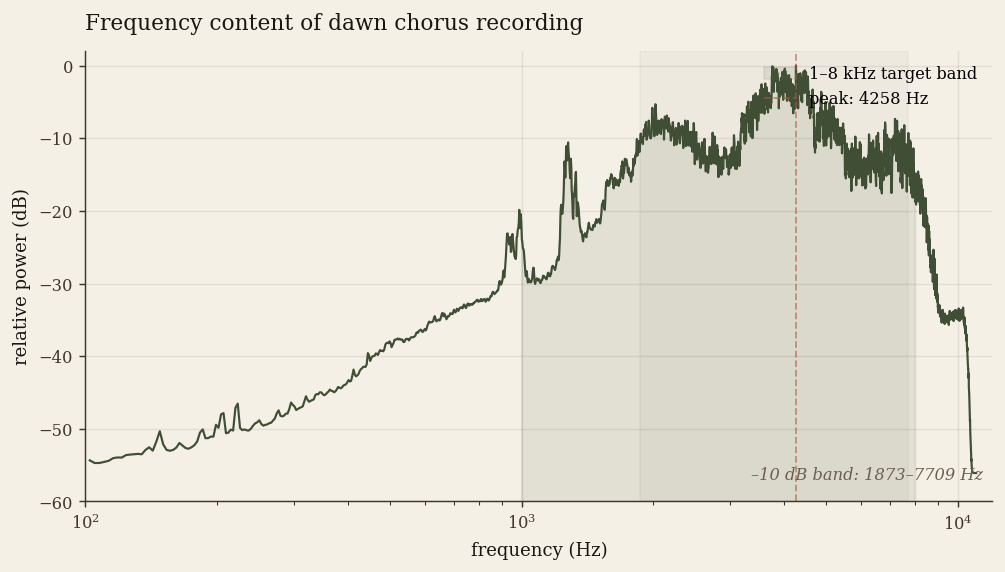

Mammals appear to treat a continuous ambient soundscape as a proxy for "no large predator is currently moving through the environment". Birdsong, in roughly the 1–8 kHz band at low volume, is a canonical example of the signal this monitoring system seems tuned to.

Recent studies provide reasonable evidence that even recorded birdsong produces measurable reductions in anxiety, with effects persisting for hours after the sound stops. The response appears to be volume-sensitive: alpha activity rises at quiet conversational levels and inverts above about sixty decibels.

A small wearable could provide this signal continuously and unobtrusively in environments where real birds are absent — offices, public transit, hospitals, dense urban interiors. That's the bet this project exists to test.

Most of what gets labelled mental fatigue is hypervigilance running in the background.

— ♪ —

§ 02 — A demonstration, in miniature

Listen, quietly.

Below is a small Web Audio sketch of the kind of soundscape the device would emit. It is procedurally synthesised — the device should never loop a recording. Set your volume low first, tap Listen, and the chorus will keep going as you read. The corner indicator above pauses from anywhere.

A procedurally-assembled chorus

A UK garden cohort — robin, blackbird, song thrush, great tit, wren, blue tit, dunnock, plus background contact calls — modelled from acoustic-parameter literature with stateful scheduling: songs avoid overlap with each other, and one bird's song mildly elevates the chance of a neighbour responding. Toggle species above to hear them in isolation. Switch region to compare with an Aotearoa New Zealand native-bush cohort. See audio/voices.md for sources.
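The two scheduling rules described above (no overlapping songs, and a mild boost to a neighbour's chance of answering) can be sketched in a few lines. This is an illustrative sketch only, not the engine's actual code: the function name `schedule_chorus`, the strict one-song-at-a-time rule, and every parameter value (`base_rate`, `response_boost`, `response_window_s`, song lengths) are assumptions made for the example, not figures from audio/voices.md.

```python
import random
from dataclasses import dataclass


@dataclass
class Song:
    species: str
    start: float     # seconds from the start of the scene
    duration: float  # seconds


def schedule_chorus(species: list[str], total_s: float = 60.0,
                    base_rate: float = 0.15, response_boost: float = 2.0,
                    response_window_s: float = 3.0,
                    song_s: tuple[float, float] = (1.5, 3.0),
                    step_s: float = 0.25, seed: int = 0) -> list[Song]:
    """Step through time in small ticks and decide who sings next.

    All rates and durations are placeholder assumptions.
    """
    rng = random.Random(seed)
    songs: list[Song] = []
    busy_until = 0.0    # end of the song currently playing (no overlap allowed)
    last_singer = None  # species that sang most recently
    last_end = 0.0      # when that song ended
    t = 0.0
    while t < total_s:
        if t >= busy_until:
            # Visit species in random order so no bird is systematically first.
            for name in rng.sample(species, len(species)):
                rate = base_rate  # expected songs per second for an idle bird
                # A different bird that finished recently raises the chance
                # that this neighbour responds.
                if (last_singer is not None and name != last_singer
                        and t - last_end <= response_window_s):
                    rate *= response_boost
                if rng.random() < min(1.0, rate * step_s):
                    duration = rng.uniform(*song_s)
                    songs.append(Song(name, t, duration))
                    busy_until = t + duration
                    last_singer, last_end = name, busy_until
                    break
        t += step_s
    return songs


if __name__ == "__main__":
    for song in schedule_chorus(["robin", "blackbird", "wren"], total_s=20.0):
        print(f"{song.start:6.2f}s  {song.species:<10} {song.duration:.2f}s")
```

Because the state lives in `busy_until` and `last_singer`, the output is never a loop: changing the seed gives a different but statistically similar sequence.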

— ♪ —

§ 02½ — Does it actually sound like a chorus?

Quantitative, not aesthetic.

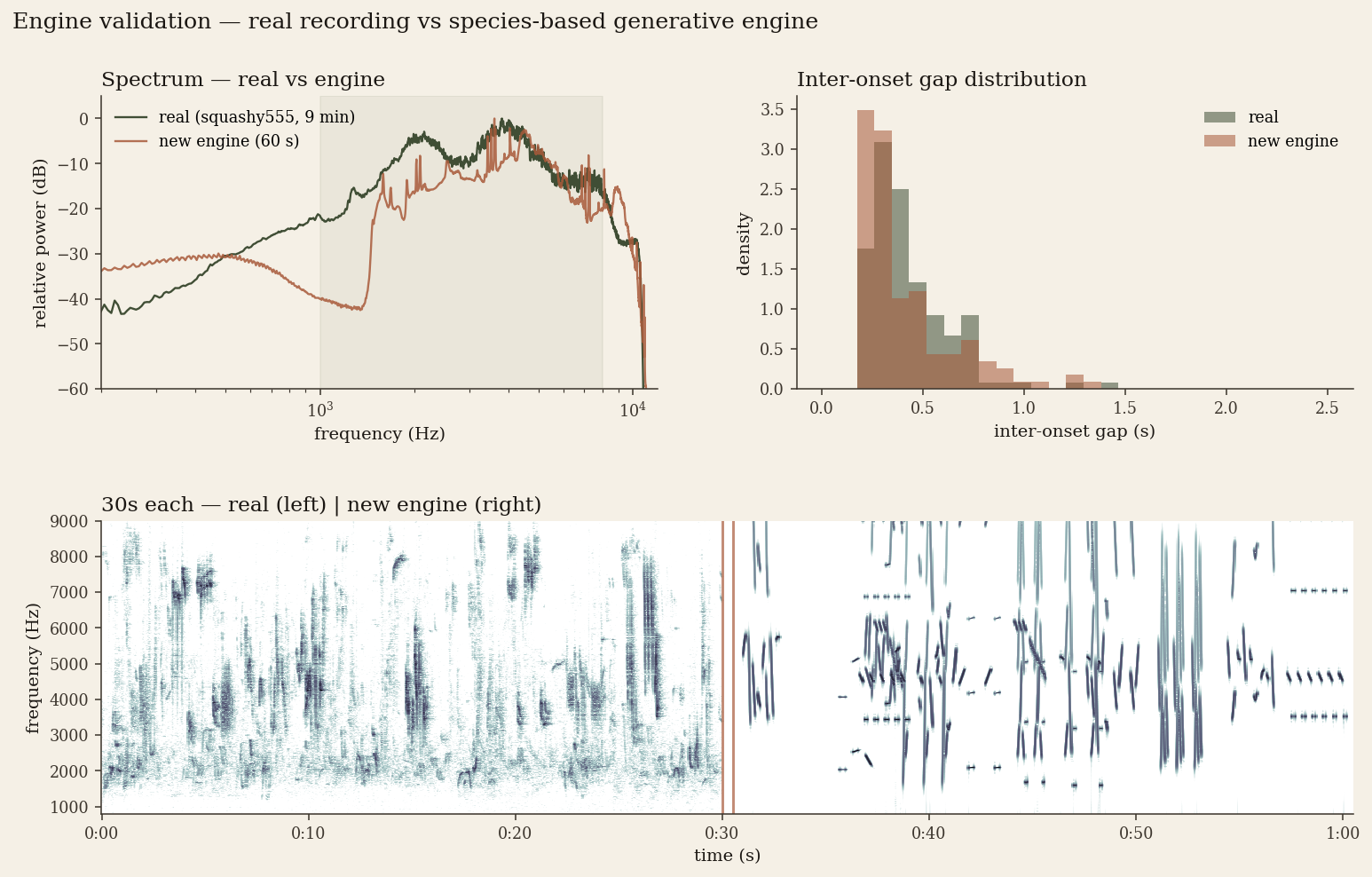

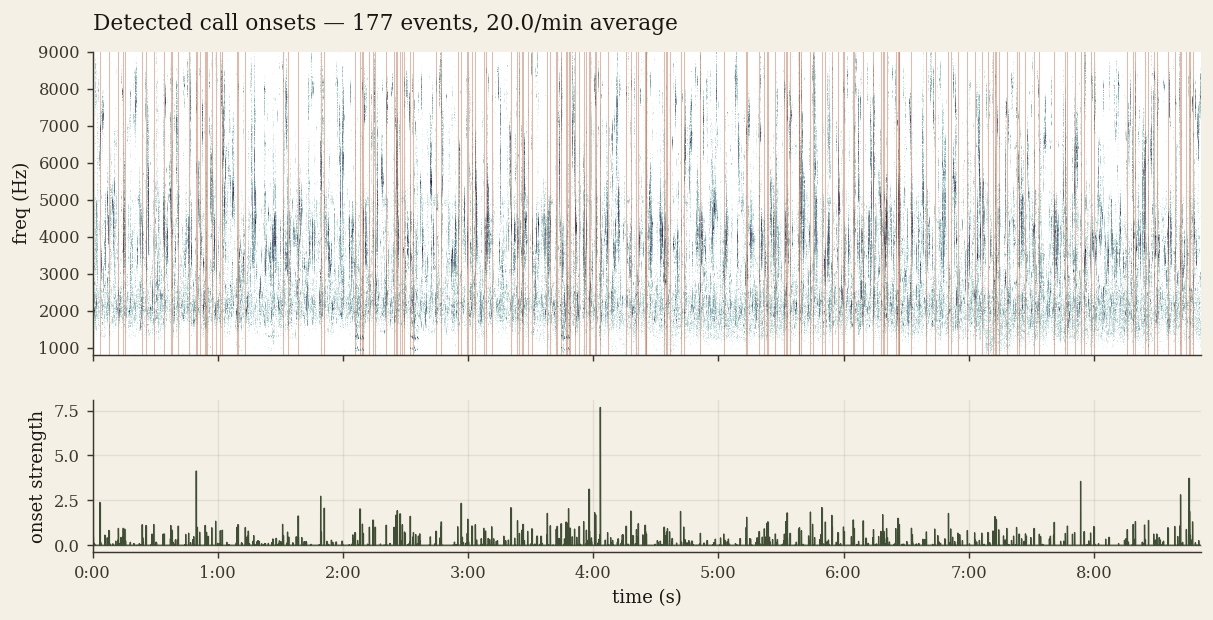

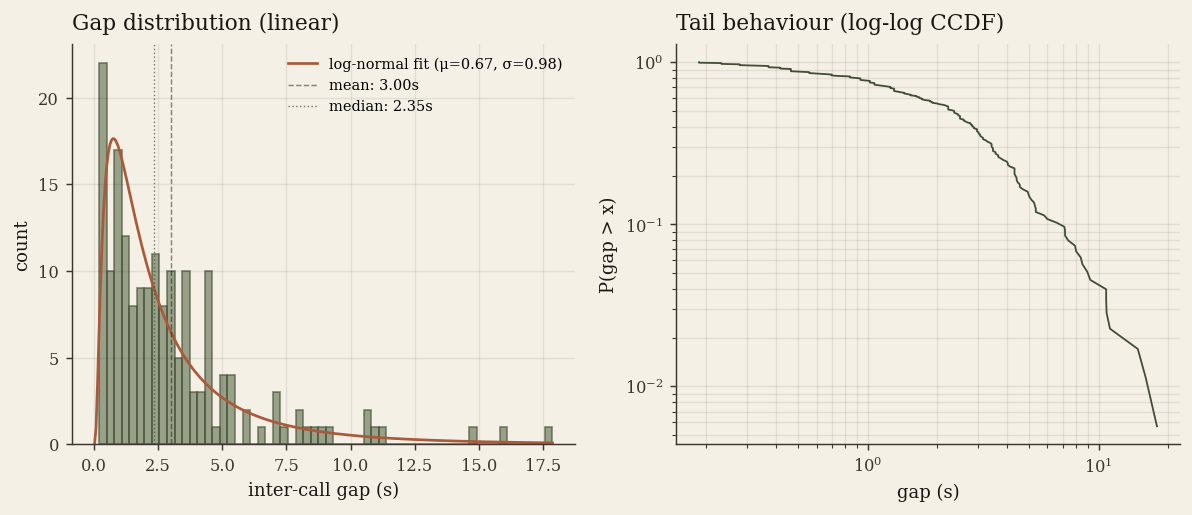

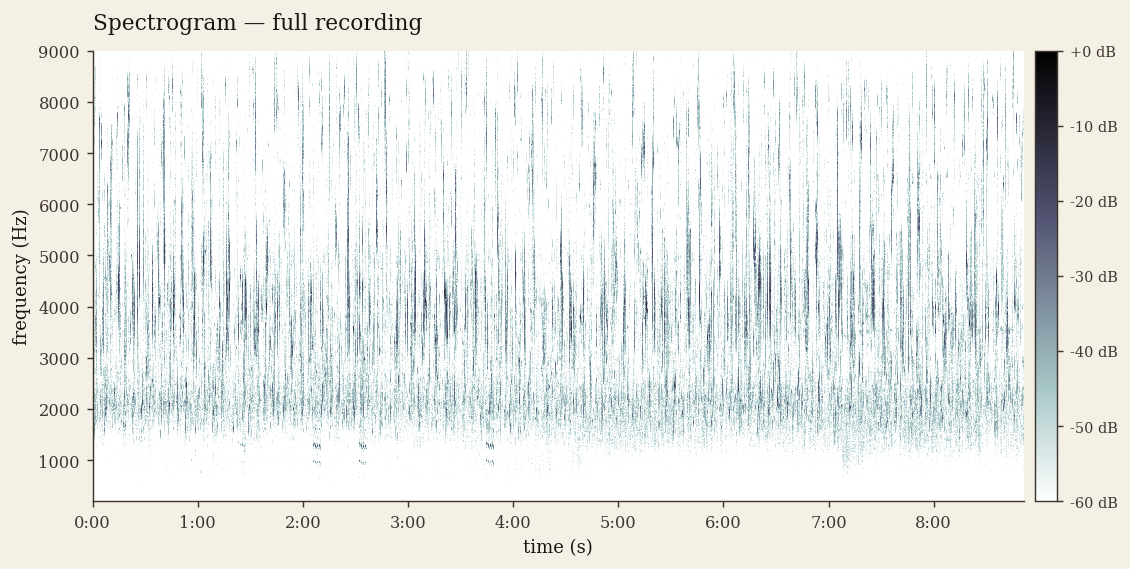

The engine above is procedural, not a recording, so the honest question is whether it lands near a real dawn chorus. We rendered sixty seconds of the engine to an offline audio context — at mid-high density, to match the reference's chorus intensity — and ran the same analysis pipeline over it as over a CC0 reference recording (squashy555, Burton-on-Trent, May 2021).

Eight of nine temporal and spectral metrics match within ~15%. The exception is density coefficient of variation: the reference chorus is remarkably steady across 30-second windows, while the engine is ~2.5× more bursty — visible as cluster-and-gap structure in the spectrogram below. A tuning issue, not a structural one.
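To make the two ideas in that paragraph concrete, here is a minimal sketch of a density coefficient of variation and a 15% tolerance check. The exact metric definitions live in audio/analysis/validation.md; the versions here (onset counts per fixed window, population standard deviation over the mean, relative difference against the reference) are assumptions for illustration, and the function names are hypothetical.

```python
from statistics import mean, pstdev


def density_cv(onsets_s: list[float], total_s: float, window_s: float) -> float:
    """Coefficient of variation of onset counts across fixed windows.

    Assumed definition: population std of per-window counts / their mean.
    """
    n_windows = int(total_s // window_s)
    counts = [0] * n_windows
    for t in onsets_s:
        i = int(t // window_s)
        if 0 <= i < n_windows:  # ignore onsets in a trailing partial window
            counts[i] += 1
    m = mean(counts)
    return pstdev(counts) / m if m > 0 else float("nan")


def within_tolerance(engine: float, reference: float, tol: float = 0.15) -> bool:
    """True when the engine value is within tol (relative) of the reference."""
    return abs(engine - reference) <= tol * abs(reference)


if __name__ == "__main__":
    steady = [0.5, 1.5, 2.5, 3.5]   # one onset per window
    bursty = [0.1, 0.2, 0.3, 2.5]   # clustered, then a gap
    print(density_cv(steady, total_s=4.0, window_s=1.0))
    print(density_cv(bursty, total_s=4.0, window_s=2.0))
```

A steady chorus gives a CV near zero; cluster-and-gap structure pushes it up, which is the pattern the burstiness exception describes.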

Full method, numbers, and an honest discussion of what doesn't match are in audio/analysis/validation.md. The pipeline is reproducible; see audio/analysis/README.md.

— ♪ —

§ 03 — Mechanism

Three things downregulate simultaneously.

When the brain registers ambient acoustic safety, the amygdala settles, the parasympathetic nervous system takes over from the sympathetic, and the rumination circuit in the posterior cingulate cortex quiets. Heart-rate variability rises; cortisol drops. The mood lift outlasts the sound.

This is the broad shape of the nature-restoration literature. The narrower, more interesting question — and the one this project is built around — is whether a small device emitting the right kind of signal at the right volume can engage the same response in environments where real birds are absent.

- Frequency: 1,000–8,000 Hz, where temperate-zone bird vocalisation lives

- Volume: 45–55 dB(A); above sixty, the response inverts

- Continuity: stochastic, never looped; habituation is the failure mode

- Presence: heard but not foregrounded; available to be ignored
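The volume constraint is the one most easily expressed as code. The sketch below shows one way a level controller might nudge output back into the 45–55 dB(A) band and drop immediately if it ever reaches sixty. It assumes a calibrated estimate of the level at the ear is available (`measured_db`), which the project would have to establish separately; the function names and the 3 dB step limit are hypothetical.

```python
TARGET_MIN_DB = 45.0    # lower edge of the target band, dB(A)
TARGET_MAX_DB = 55.0    # upper edge of the target band, dB(A)
HARD_CEILING_DB = 60.0  # at or above this the response is said to invert


def db_to_linear(db: float) -> float:
    """Convert a gain change in decibels to a linear amplitude factor."""
    return 10.0 ** (db / 20.0)


def gain_correction_db(measured_db: float, max_step_db: float = 3.0) -> float:
    """Gain change (dB) that moves the measured level toward the target band.

    Inside the band: no change. Outside it: step toward the nearest edge,
    limited to max_step_db so changes stay unobtrusive (assumed value).
    At or above the hard ceiling: jump straight back to the band edge.
    """
    if TARGET_MIN_DB <= measured_db <= TARGET_MAX_DB:
        return 0.0
    if measured_db > TARGET_MAX_DB:
        error = TARGET_MAX_DB - measured_db  # negative: turn down
    else:
        error = TARGET_MIN_DB - measured_db  # positive: turn up
    if measured_db >= HARD_CEILING_DB:
        return error                         # no step limit when too loud
    return max(-max_step_db, min(max_step_db, error))


if __name__ == "__main__":
    for level in (40.0, 50.0, 57.0, 62.0):
        change = gain_correction_db(level)
        print(level, change, round(db_to_linear(change), 3))
```

The asymmetry is deliberate in this sketch: being too quiet is harmless, so upward corrections are gradual, while exceeding the ceiling is treated as a fault to correct in one step.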

— ♪ —

§ 04 — What's in the project

The vision, taken seriously.

Six documents form the spine of the work: the hardware design, the firmware that drives it, the research it's grounded in, the experimental methodology that would either support or falsify the hypothesis, the bioacoustic model behind the audio engine, and the analysis comparing that engine against a real chorus. This is a personal-research project, not a product, and that's reflected in what it commits to.

The device

Pendant or open-ear, around £20 in components. ESP32-S3, I²S DAC, MEMS mic for ambient calibration, optional light sensor for circadian behaviour. Bill of materials, form-factor trade-offs, and the acoustic constraints the design has to respect.

Read the hardware notes

§ 04.2 — Firmware

Procedural audio

The technical bet, expanded. A small library of stems, a Scene Director that decides what the soundscape should be, a Voice Allocator that schedules calls with realistic gap timing. Optional bird-aware mode: real birds make the device defer.

Read the firmware notes

§ 04.3 — Research

The evidence

Stobbe et al. (2022), Hammoud et al. (2022), Yu et al. (2025), and the broader nature-restoration literature — with honest notes on what each paper actually shows, what it doesn't, and where the popular narrative outruns the science.

Read the research notes

§ 04.4 — Methodology

How we'd know

A four-week n=1 self-experiment with sealed schedules, a small pre-registered crossover pilot with a sham control, and a list of results that would falsify the project. Anything that calls itself a wellness device and ships without a falsification plan is a placebo.
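One possible reading of "sealed schedules" is a randomised condition schedule whose hash is published before the experiment starts, so the schedule cannot be quietly changed afterwards. The sketch below follows that reading and is an assumption throughout: the condition labels, the balanced blocks of four days, the salted SHA-256 commitment, and the function names are all illustrative rather than taken from the methodology document.

```python
import hashlib
import json
import random
import secrets

CONDITIONS = ("device_on", "device_off")  # hypothetical condition labels


def commitment(schedule: list[str], salt: str) -> str:
    """SHA-256 over the salted schedule; safe to publish before day one."""
    payload = json.dumps({"salt": salt, "schedule": schedule}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def make_sealed_schedule(days: int = 28, block: int = 4,
                         seed=None, salt=None):
    """Build a block-randomised schedule plus its commitment hash.

    Each block holds equal numbers of each condition in shuffled order.
    The salt stops anyone from brute-forcing the small schedule space.
    """
    rng = random.Random(seed)  # seed=None draws from OS entropy
    salt = salt if salt is not None else secrets.token_hex(16)
    schedule: list[str] = []
    for _ in range(days // block):
        chunk = list(CONDITIONS) * (block // 2)
        rng.shuffle(chunk)
        schedule.extend(chunk)
    return schedule, salt, commitment(schedule, salt)


def verify(schedule: list[str], salt: str, published: str) -> bool:
    """Check a revealed schedule and salt against the published hash."""
    return commitment(schedule, salt) == published


if __name__ == "__main__":
    schedule, salt, digest = make_sealed_schedule()
    print("publish now:", digest)
    print("reveal later:", verify(schedule, salt, digest))
```

In use, the schedule and salt would be kept by someone other than the participant, with only the digest made public until the four weeks are over.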

Read the methodology

Species & voices

The bioacoustic model behind the demo above: a UK garden cohort of eight species built from four call-type templates (continuous warble, motif-shrill, repeating phrase, trill), each grounded in citable literature. Adjacent notes on source recordings and a looping recipe for field-recording pilots.

Read the voice model

Numbers, not vibes

The engine rendered offline and compared to a reference dawn-chorus recording on onset density, gap distribution, averaged spectrum, and spectrogram: eight of nine metrics within ~15%, with the burstiness exception documented. An earlier tuning report documents the changes that got it there; the analysis pipeline is reproducible.

Read the validation

— ♪ —

§ 05 — Where we have to be careful

What the science does not say.

The behavioural and EEG effects of birdsong on stress markers are real and replicated. The specific evolutionary-neuroethological story — the “200-million-year-old predator-detection circuit” — is plausible narrative, not established neuroscience.

Wellness-coded interventions are also notoriously susceptible to placebo, novelty, and reporting-bias effects. The methodology takes this seriously.

Not a medical device. Not intended to treat, diagnose, or cure anything. No efficacy claims should be inferred from the existence of this site. The current state of the project is a falsifiable hypothesis and a clear plan to test it.

Negative results, if we find them, will be published as readily as positive ones.